Let me start with some facts about the load on our internet line at NPF#13: we had an average load of 600Mbps and a peak of just over 1Gbps, which caused some latency spikes Saturday evening after the stage show. There were 1200 participants, and a single pfSense routed the traffic, with a second ready as backup. With these facts in mind and 2200 tickets sold for NPF#14, an 83% increase over NPF#13, we expected that 2Gbps on the internet line would be sufficient. More precisely, I expected a load of approximately 1.1Gbps on average and a peak of about 1.8Gbps, but as we’ll see in a bit this was way off the actual measured values.

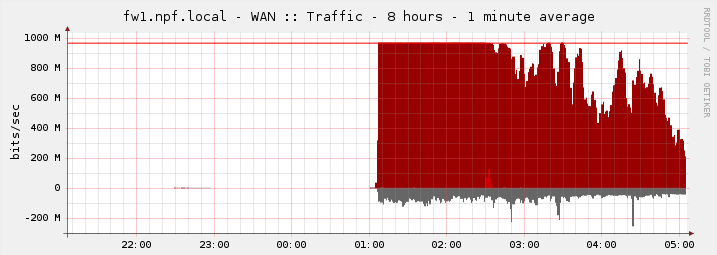
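
The expectation above is a straight linear scaling of the NPF#13 numbers by ticket count. As a minimal sketch of that arithmetic (the function name is made up for illustration, the peak is taken as a flat 1000Mbps, and it assumes each participant uses the same bandwidth as last year, which turned out not to hold):

```python
# Linear scaling of last year's measured load by ticket count.
# Assumption: per-participant bandwidth use is unchanged from NPF#13.

def scale_load(load_mbps: float, old_participants: int, new_participants: int) -> float:
    """Scale a measured load linearly with the number of participants."""
    return load_mbps * new_participants / old_participants


NPF13_PARTICIPANTS = 1200
NPF14_TICKETS = 2200

increase_pct = (NPF14_TICKETS / NPF13_PARTICIPANTS - 1) * 100
expected_avg = scale_load(600, NPF13_PARTICIPANTS, NPF14_TICKETS)    # NPF#13 average
expected_peak = scale_load(1000, NPF13_PARTICIPANTS, NPF14_TICKETS)  # NPF#13 peak, rounded down to 1Gbps

print(f"increase: {increase_pct:.0f}%")               # 83%
print(f"expected average: {expected_avg:.0f} Mbps")   # 1100 Mbps
print(f"expected peak: {expected_peak:.0f} Mbps")     # 1833 Mbps
```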

Luckily we had four 1Gbps lines. However, due to time pressure and my underestimation of the number of crew members (network technicians) needed, only a single line was up and running at opening time. My hope was that we could get the other lines up before the internet bandwidth became a problem. Unfortunately, because of other problems with the network, it wasn’t until 9 o’clock that I had time to work on the second gigabit line, and by then the first line was under pressure, especially after the stage show and at the start of the tournaments.

This unfortunately forced the game admins of League of Legends and Counter-Strike: Global Offensive to postpone the tournaments until we had more bandwidth, because Riot Games (League of Legends) and Valve (Counter-Strike: Global Offensive) had released updates that evening and people weren’t able to download them fast enough. After spending the better part of 4 hours on it, with a lot of interruptions, I finally got the second gigabit line up and running. Here comes the funny part: it took less than 30 seconds before the second line was fully utilized. Luckily, people did report that their speed tests on http://speedtest.net/ went from 0.4Mbps to approximately 25Mbps.

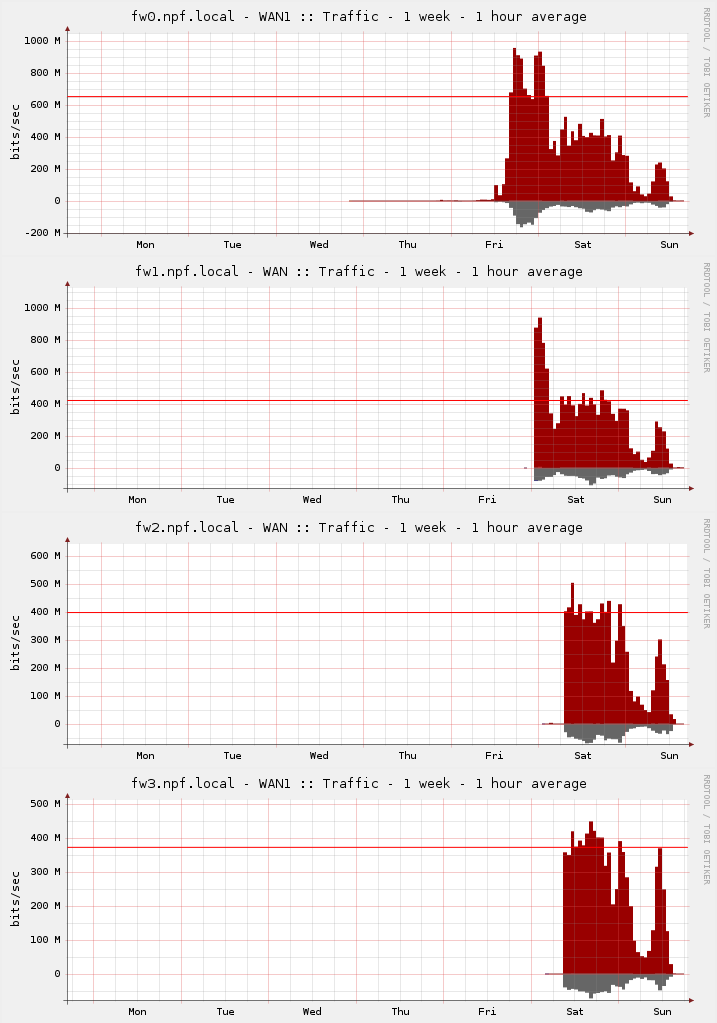

This didn’t solve the bandwidth problem completely, however, so I started setting up the third and fourth pfSenses. This time there was one major difference: BitNissen and I found that it’s possible to extract the configuration of one pfSense and load it into another. All that was left for us to do was adjust the public IP values. This didn’t succeed on the first attempt, because we missed some of the values and had to do some manual troubleshooting to correct the mistakes. We learned from these mistakes, and the fourth pfSense was configured in less than 10 minutes. By the time we got to this point it was 4 o’clock in the morning and the load on the WAN links had dropped considerably, so I decided to get a couple of hours of sleep before connecting them to our network, ahead of the tournaments that were set to start again Saturday at 10 o’clock. Around 9:30 the last two pfSenses were connected to our network, and from that point on we didn’t have any problems with the internet bandwidth. As mentioned in another blog post, NPF#14: Network for 2200 people, we ended up having 23TB of traffic in total on the WAN interfaces of our core switches, and that in only 47 hours. Below you can see the average load on the four WAN links.
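
To give an idea of what "adjusting the public IP values" in an exported configuration could look like if scripted, here is a rough sketch. It is not what we ran that night (we edited the values by hand). The element paths (`interfaces/wan/ipaddr`, `interfaces/wan/subnet`, `gateways/gateway_item`) are my assumption about the layout of the export, the sample XML is a made-up fragment, and the addresses come from the documentation ranges. A real export may hold public IPs in other places too, which this sketch does not touch.

```python
import xml.etree.ElementTree as ET


def retarget_wan(config_xml: str, ip: str, subnet_bits: int, gateway_ip: str) -> str:
    """Return a copy of an exported config with new WAN address values.

    Assumed layout (not verified against every pfSense version):
    interfaces/wan/{ipaddr,subnet} and gateways/gateway_item/{interface,gateway}.
    """
    root = ET.fromstring(config_xml)
    wan = root.find("interfaces/wan")
    if wan is None:
        raise ValueError("no interfaces/wan element found")

    # Overwrite (or create) the WAN address and prefix length.
    for tag, value in (("ipaddr", ip), ("subnet", str(subnet_bits))):
        node = wan.find(tag)
        if node is None:
            node = ET.SubElement(wan, tag)
        node.text = value

    # Point every gateway bound to the WAN interface at the new upstream.
    for item in root.findall("gateways/gateway_item"):
        gw = item.find("gateway")
        if item.findtext("interface") == "wan" and gw is not None:
            gw.text = gateway_ip

    return ET.tostring(root, encoding="unicode")


# Hypothetical, heavily trimmed export used only to exercise the function.
SAMPLE = """<pfsense>
  <interfaces>
    <wan><ipaddr>192.0.2.10</ipaddr><subnet>29</subnet></wan>
  </interfaces>
  <gateways>
    <gateway_item><interface>wan</interface><gateway>192.0.2.9</gateway></gateway_item>
  </gateways>
</pfsense>"""

cloned = retarget_wan(SAMPLE, "198.51.100.18", 29, "198.51.100.17")
print(cloned)
```

Anything the script does not know about still has to be checked by hand, which is exactly where we lost time on the third pfSense.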

Finally, I will just mention that on Saturday evening we measured a peak load of 2.8Gbps, and that the average during normal gaming hours was roughly 2.1-2.2Gbps, which was a lot more than originally expected. So I’m guessing that next year we’ll need a 10Gbps or larger line in order not to run out of bandwidth, if we choose to expand further.
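
As a rough cross-check, the 23TB total can be converted into an average rate over the whole event. This is a minimal sketch that assumes decimal terabytes (10^12 bytes) and spreads the traffic evenly over the 47 hours; the function name is made up for illustration.

```python
def average_gbps(total_bytes: float, hours: float) -> float:
    """Average rate in Gbps for a byte total spread evenly over a period."""
    bits = total_bytes * 8
    seconds = hours * 3600
    return bits / seconds / 1e9


TOTAL_BYTES = 23e12  # assumption: 23TB counted as decimal terabytes
EVENT_HOURS = 47

avg = average_gbps(TOTAL_BYTES, EVENT_HOURS)
print(f"{avg:.2f} Gbps averaged over the whole event")  # 1.09 Gbps
```

That is about half of the gaming-hours average, which fits with the load dropping considerably during the night.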